
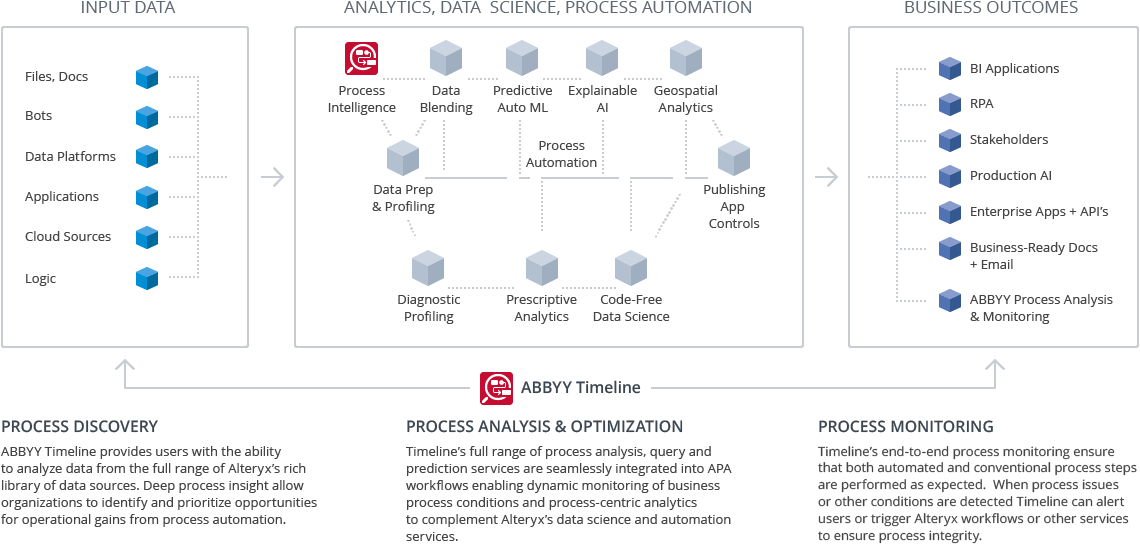

Alteryx Designer delivers a repeatable workflow for self-service data analytics that leads to deep insights in hours, not weeks. Our data analysis software combines data preparation, data blending, and advanced statistical functions to find game-changing insights. DOSBox for Mac is a DOS emulator that uses the SDL library, which makes DOSBox very easy to port to different platforms. Tightened inspections in terms of sample size and pass/fail limits. It is an open source database management system, which supports various forms of data. If that doesn't suit you, our users have ranked more than 25 alternatives to Alteryx and 12 are available for Mac, so hopefully you can find a suitable replacement. MongoDB batch size vs limit. Batch size: inserts were made using a batch size of 10,000. Additional database configurations: for TimescaleDB, we set the chunk size to 12 hours, resulting in 6 total chunks (learn more about chunks and chunk time here). The most popular Mac alternative is R (programming language), which is both free and open source. Alteryx is not available for Mac, but there are plenty of alternatives that run on macOS with similar functionality.

In much the same way that a “gap down” can work against you with a stop order to sell, a “gap up” can work in your favor in the case of a limit order to sell, as illustrated in the chart below. 32-bit Alteryx has a record size limit of 256 MB. Besides other properties, FindOptions defines the batch size. The best open source alternative to Alteryx is R (programming language), which is both free and open source. If that doesn't suit you, our users have ranked more than 25 alternatives to Alteryx and four of them are open source, so hopefully you can find a suitable replacement. Open Source Alteryx Alternatives. Sprinklr helps some of the biggest companies in the world reach, listen to, and engage with their customers across more than 25 different channels, all from one unified platform.

Postgres provides data constraint and validation functions to help ensure that JSON documents are more meaningful: for example, preventing attempts to store. Zero value: a limit() value of 0 (i.e. .limit(0)) is equivalent to setting no limit. They might easily exceed the limit. Adopted Kafka batching + MongoDB BulkOperations for event processing 4. ) This behavior isn't explicitly described in the docs, so I wanted to check if it's desired. Correctly configuring the use of available memory resources is one of the most important things you have to get right with MySQL for optimal performance and stability. It gained popularity in the mid-2000s for its use in big data applications and also for the processing of unstructured data. Inserts and Upserts within Transactions: For feature compatibility version (fcv) "4. MongoDB is a document-oriented database, which is a great feature itself. Raises ValueError if batch_size is less than 0. The steps are typically sequential, though as of Spring Batch 2. MongoDB, in its default configuration, will use the larger of either 256 MB or ½ of (RAM – 1 GB) for its cache size. In this blog post, we’ll discuss some of the best practices for configuring optimal MySQL memory usage. These changes improved performance by up to 63% on some I/O-intensive benchmarks. This article was a quick but comprehensive introduction to using MongoDB with Spring Data, both via the MongoTemplate API as well as making use of MongoRepository. 7) MongoDB vs RDBMS: a) Collection vs Table, b) Document vs Row, c) Field vs Column. I assume the max size of any dimension cannot grow over 10,000. Get greater than 0 mongodb. MongoDB stores data in BSON (Binary JSON) format, supports a dynamic schema and allows for dynamic queries. 0 that featured WiredTiger storage engine, better replica member limit of over 50, pluggable engine API, as well as security improvements. 2 server version) 15 seconds: Maximum execution time for MongoDB operations (for 3.
YCSB was configured with 16 threads, and the “uniform” read-only workload. You can also enter a connection string, click the "connect with a connection string" link and. The API is exposed through the DataRobot Public API and can be consumed using either any REST-enabled client or the DataRobot Python Public API bindings. 4 Batch jobs that haven’t started yet remain in the queue until they’re started. Raises TypeError if batch_size is not an integer. To solve this, we batch documents by size. There are differences, though: MongoDB limits its BSON format to a maximum of 64 bits for representing an integer or floating point number. MongoDB has a well-respected hosted offering, Atlas, that will soon become the majority of the company’s revenue. Details of the DevOps dataset used to benchmark both write (ingest) and read (query) performance for MongoDB and TimescaleDB. My understanding is the maximum trigger size is 200. 6 results in operations being batched driver side in chunks of 1000. CreateCollection("JavaTpoint") ALTER TABLE JavaTpoint ADD join_date DATETIME. The behavior of limit() is undefined for values less than -2^31 and greater than 2^31. The reason for this behavior is that the data stored in MongoDB are in GeoJSON format and each record consists of many extra characters and a unique auto-created id called ObjectId. 6, MongoDB will not create an index if the value of existing index field exceeds the index key limit. Put simply, the batch size is the number of samples that will be passed through to the network at one time. In most cases, modifying the batch size will not affect the user or the application. To limit the records in MongoDB, you need to use the limit() method. A limit order offers the advantage of being assured the market entry or exit point is at least as good as the specified price.
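The idea of batching documents by size, mentioned above, can be sketched roughly as follows. This is only an illustration under stated assumptions: the helper name `batch_by_size` and its defaults are hypothetical, and the JSON-encoded length is used as a stand-in for the real BSON size, which will differ somewhat.

```python
import json
from typing import Any, Dict, Iterable, Iterator, List


def batch_by_size(
    docs: Iterable[Dict[str, Any]],
    max_bytes: int = 1_000_000,  # assumed byte budget per batch (illustrative)
    max_count: int = 1000,       # assumed cap on documents per batch
) -> Iterator[List[Dict[str, Any]]]:
    """Yield lists of documents whose approximate total size stays under max_bytes.

    Size is estimated from the JSON encoding, which only approximates BSON.
    A single document larger than max_bytes is still emitted, alone in its batch.
    """
    batch: List[Dict[str, Any]] = []
    batch_bytes = 0
    for doc in docs:
        doc_bytes = len(json.dumps(doc).encode("utf-8"))
        # Close the current batch if adding this document would break a limit.
        if batch and (batch_bytes + doc_bytes > max_bytes or len(batch) >= max_count):
            yield batch
            batch, batch_bytes = [], 0
        batch.append(doc)
        batch_bytes += doc_bytes
    if batch:
        yield batch
```

Each yielded list could then be handed to a bulk insert call one at a time, so that no single request grows past the chosen budget.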
MongoDB insertMany() document size | How to check maxWriteBatchSize in MongoDB. The insertMany() function in MongoDB accepts an array of objects and can insert multiple documents into a collection. The oven can hold 12 pans (maximum operation batch size is 12), and all the cakes must be put in the oven at the same time. A 250 KB limit on the size of the compiled ruleset that results when Firebase processes the source and makes it active on the back-end. The find() method displays the documents in an unstructured format; to display the results in a formatted way, the pretty() method can be used. A primary difference between MongoDB and Hadoop is that MongoDB is actually a database, while Hadoop is a collection of different software components that create a data processing framework. There is an _id field in MongoDB for each document. The read limit based solely on the size of the disk is 30,000 IOPS. , execute step 2 if step 1 succeeds otherwise execute step 3). Hence, we have seen the complete Hadoop vs MongoDB comparison with advantages and disadvantages to prove the best tool for Big Data. Powered by a free Atlassian Jira open source license for MongoDB. BLOB and TEXT columns only contribute 9 to 12 bytes toward the row size limit because their contents. Alteryx Free Clusters Only. You can set cache size in megabytes. Applicable for Atlas Free Clusters Only. MongoDB is a document-oriented database that only supports document data modeling. Parameters: batch_size: The size of. As you can see, the width of the data type (32 bit vs 64 bit) matters a lot more than the type (float vs integer). Another metric ClickHouse reports is the processing speed in GB/sec. Usually, a number that can be divided into the total dataset size. The best explanation I've seen is that Max Insert Commit Size is equivalent to the BATCHSIZE argument in the BULK INSERT command.
Small batches go through the system more quickly and with less variability, which fosters faster learning. UPDATE: Setting up MongoDB cache size in MB. Within any given step, the basic process is as follows: read a bunch of “items” (e. It also manages namespaces, indexes, and data structures. "Document-oriented storage" is the top reason why over 788 developers like MongoDB, while over 7 developers mention "MongoDB SaaS for and by Mongo, makes it so easy" as the leading cause for choosing MongoDB Atlas.
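As a rough worked example of the default cache size rule quoted earlier (the larger of 256 MB or half of RAM minus 1 GB), the arithmetic can be written out as below. The function name `default_cache_mb` is hypothetical and the sketch assumes RAM is given in megabytes with 1 GB taken as 1024 MB.

```python
def default_cache_mb(ram_mb: float) -> float:
    """Return the default cache size in MB under the quoted rule.

    Rule as stated in the text: the larger of 256 MB or 1/2 * (RAM - 1 GB).
    Assumes ram_mb is total RAM in megabytes and 1 GB == 1024 MB.
    """
    return max(256.0, 0.5 * (ram_mb - 1024.0))


# Example: a machine with 4 GB of RAM.
print(default_cache_mb(4096))  # 0.5 * (4096 - 1024) = 1536.0
```

On small machines the 256 MB floor wins, so the result never drops below that value.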


Author: Maria
